Data Integration: The engine behind modern decision-making

Modern businesses run on data that can exist anywhere, in SaaS applications, databases, data warehouses, legacy systems, and even files. Each holds value to the business, but isolated, they tell only a fragment of the story.

Data Integration is the tool that brings these fragments together. It creates a single, consistent picture. A foundation that is complete and accurate ready for analytics, operations, innovation, and AI. It is the discipline that ensures every decision is made on complete, trusted information.

Effective integration combines three essential capabilities

Movement

Securely capturing and synchronising data across cloud and on-prem environments in real time.

Transformation

Converting raw data into clean, governed, and analysis-ready formats that align with business logic.

Orchestration

Automating the flow of data through every stage from ingestion to delivery with visibility and control.

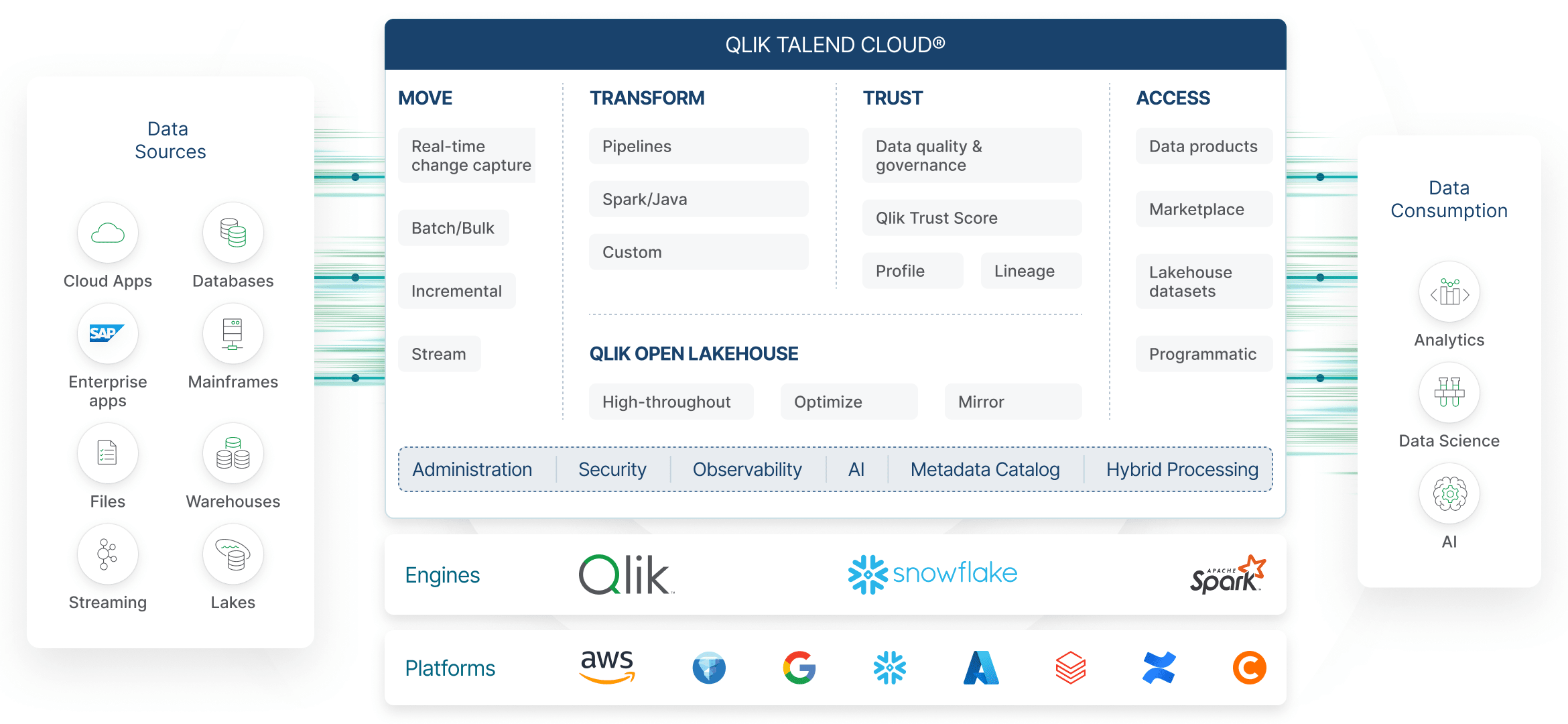

Qlik Talend Cloud Components

Modern data estates demand more than movement and transformation. They require a tool that allows the organisation to work faster, govern better, and prepare for AI at scale.

By unifying data from every part of the organisation, integration moves beyond process and becomes the mechanism that converts complexity into insight. Qlik Talend Data Integration was built to harness that power: to move, transform, and deliver trusted data wherever it’s needed.

Qlik Talend Cloud (QTC) offers components that elevate it from just an integration tool into an Enterprise platform.

Data Quality & Governance

Ensuring every dataset is accurate, compliant, and trusted across the business.

AI-Ready Data

Preparing reliable, well-structured information that powers analytics, prediction, intelligent automation, and the emerging world of Retrieval-Augmented Generation (RAG), where models can answer questions using your own governed data.

Advanced Storage with Iceberg

Leveraging open table formats like Apache Iceberg to store large-scale data efficiently, enable time-travel, support ACID transactions, and create a future-proof foundation for analytics and AI workloads.

Business value of Modern Data Integration

Modern organisations expect their data platforms to deliver clarity, control, and confidence, turning fragmented or slow data into a reliable business asset. As businesses become more data-driven, the challenge is no longer access to data but managing it in a way that limits operational risk, supports confident decision-making, and reduces complexity.

Qlik Talend Cloud addresses these challenges by providing enterprise-grade governance and transparency across the entire data journey. Built-in stewardship empowers business owners to validate and certify data, creating shared responsibility for data quality and strengthening trust across the organisation.

By centralising how data is managed, prepared, and governed, Qlik Talend Cloud helps organisations simplify complex data landscapes and establish a dependable foundation for insight, analytics, and innovation.

Tangible Business Benefits

Speed

Fresh, ready-to-use data reaches decision-makers faster, without waiting for manual processes or overnight batches.

Outcome: quicker decisions, faster response to change, and fewer operational delays.

Trust

Built-in governance, oversight, and certification processes ensure accuracy, consistency, and accountability.

Outcome: confident reporting, fewer errors, and better strategic decisions.

Agility & Scalability

As your business evolves, Qlik Talend Cloud adapts, supporting growth, new platforms, and new requirements without disruption.

Outcome: the freedom to modernise, expand, or pivot without costly rework.

Want to experience the difference in your organisation?

Start your free 14-day trial today.

Want to see a demo?

See how we helped the design and manufacturing company AESSEAL to massively reduce the time it took to feed the Oracle data warehouse from SAP, from an overnight data transfer process to just minutes with Qlik’s Data Integration platform.

The webinar covers

- How AESSEAL cut data transfer time by almost 99% with Qlik Replicate.

- Discover how Qlik Replicate unites multiple data sources seamlessly.

- Learn how automation streamlines your data pipeline and speeds up reporting.

- Watch the platform in action and see data integration made easy.

Platform Overview

Qlik Talend Data Integration brings together Qlik’s real-time data movement and Talend’s transformation, quality, and governance into a single platform that supports both modern cloud architectures and existing on-premises.

Cloud-native and hybrid ready

Qlik Talend Cloud runs in the cloud while providing secure, seamless connectivity to on-prem systems through the Data Movement Gateway. Data movement can operate in the cloud, on-prem, or as a hybrid solution depending on your architecture and requirements.

Broad Connectivity

Qlik Talend Cloud supports a wide range of connectors for on prem database systems (relational and NoSQL) as well as cloud platforms (AWS, Azure, GCP), messaging systems (Kafka), SaaS Applications (Salesforce, Marketo), and more.

Future-proofed Architecture

Open by design for lakehouses, AI, and next-generation automation. Qlik Talend Cloud supports open table formats, Iceberg-ready storage, and governed data for a variety of business use cases.

Talend Studio

Developers have full control with this robust, on-premises design environment. It is positioned for complex data workflows, custom logic, advanced transformations, published APIs and more.

The Essentials of Qlik’s Unified Data Integration Platform

With these capabilities combined, Qlik Talend Data Integration delivers a complete, unified approach to enterprise data. The platform is best understood through ten core areas that underpin everything it does.

1. Data Streaming

Qlik Talend delivers real-time data capture across databases, SaaS platforms, and legacy systems using high-performance CDC. It streams inserts, updates, and deletes continuously with minimal load on operational systems. This ensures every downstream process receives the freshest data the moment it changes.

2. AI

AI is becoming central to how organisations build and consume data platforms. Qlik Talend Cloud integrates AI capabilities throughout the lifecycle, from assistants that help generate SQL and pipeline logic to Knowledge Marts that support modern AI and RAG workloads. This ensures AI initiatives are powered by governed, high-quality data rather than fragmented or inconsistent sources.

3. Unified Data Movement

The platform supports all forms of data movement: batch, CDC, ingestion, replication, and streaming, across cloud and on-prem environments. It scales effortlessly to handle high-volume pipelines without manual tuning. This gives organisations a single, consistent approach to moving data wherever it’s needed.

4. Data Transformation

Qlik Talend Cloud supports both ELT and ETL, offering visual design tools, code-based flows, AI SQL Assistant, and SQL pushdown for high-performance transformation. It automates data warehouse and data mart creation through model-driven patterns. This ensures analytics-ready data is produced consistently and at speed.

5. Data Quality

Built-in profiling, cleansing, and validation ensures data is accurate and trustworthy throughout its lifecycle. Talend Trust Score™ delivers automated, transparent quality assessment at the dataset level. Enterprise-grade data quality services provide consistent standards across every domain. Qlik Talend Cloud provides advanced stewardship tools for reviewing, correcting, and certifying data, rules management, enrichment, and monitoring.

6. Data Governance

The platform centralises metadata, lineage, and policy management to enforce consistent governance. Role-based access control and auditability ensuring data is used safely and responsibly. This provides full visibility across ingestion, transformation, and consumption.

7. Open Lakehouses

Qlik Talend Cloud integrates effortlessly with Snowflake, Databricks, BigQuery, Microsoft Fabric, and open standards like Apache Iceberg. Pipelines are built to be portable, allowing you to switch targets or migrate between platforms with minimal rework. This future-proofs your architecture, avoids lock-in, and ensures data remains usable across generations of technology.

8. Integrations & Connectors

The platform includes native connectivity to 150+ sources and targets, covering SaaS apps, databases, cloud platforms, APIs, and file systems. It standardises connectivity patterns and removes the need for custom connectors. This allows new systems and datasets to be onboarded with minimal friction.

9. Catalog & Linage

A centralised catalog provides searchable datasets enriched with business metadata. End-to-end lineage visualisation shows where data came from, how it was transformed, and who uses it. This gives teams confidence in the data they consume and improves transparency across the organisation.

10. Data Products

Qlik Talend Cloud enables the creation of reusable, governed data assets, including curated datasets, marts, and well-defined structures ready for analytics and operational use. These reusable assets promote consistency across teams and reduce duplication by turning reliable data into shareable, enterprise-ready products.

Modern Integration Across Every Cloud and Data Platform

THE BENEFITS OF QLIK TALEND CLOUD REALISED ACROSS YOUR ENTIRE DATA ESTATE

Qlik Talend Cloud is designed for the complexity of today’s data architectures where analytics, AI, and operations rely on dozens of systems running across multiple clouds. QTC boasts native support for Snowflake, Databricks, BigQuery, Azure, AWS, Microsoft Fabric, SAP, Salesforce, and more. It sits at the centre of your data ecosystem and brings consistency to every stage of the data journey.

By combining real-time streaming, powerful transformation, enterprise data quality, governance, and open lakehouse support, the platform creates a reliable, future-ready foundation for insight and automation. It can simplify complex data estates, reduce operational friction, and ensure that data remains accurate, accountable, and aligned to business needs.

Whether modernising legacy integrations, adopting new cloud platforms, or preparing for AI-driven workloads, Qlik Talend Cloud gives organisations the flexibility to evolve without rework or lock-in. It replaces fragmented tooling with a single, governed environment that keeps data flowing cleanly from source to insight.

Climber – A Qlik Elite Partner

We have enjoyed developing Qlik solutions for hundreds of companies across various sectors since 2007. As one of few European companies, we are proud to be a Qlik Elite Partner both within Qlik Data Analytics and Qlik Data Integration.

Our team brings broad expertise, backed by multiple Qlik certifications, to help you succeed every step of the way.

Learn more about how we have assisted other companies with their Qlik Data Integration solutions here.

Turn your data challenges into measurable outcomes

Whether you’re exploring Qlik Talend Data Integration for the first time or looking to accelerate an existing project, our team is here to help.

Contact us today!